AI can be convincing, and it will swear until it’s blue in the face that something is right and then just be completely wrong.

But that happens maybe 10% of the time. Other times it is mostly right.

So got to be careful. This guy was in his 50’s, out of work, smoking marijuana, depressed, feeling isolated. It was ripe for a catastrophe, with AI hallucinating a crappy idea and the end user just completely running with it.

He was nearing 50. His adult daughter had left home, his wife went out to work and, in his field, the shift since Covid to working from home had left him feeling “a little isolated”. He smoked a bit of cannabis some evenings to “chill”, but had done so for years with no ill effects. He had never experienced a mental illness.

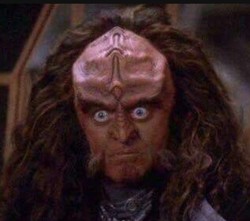

He had previously written books with a female protagonist. He put one into ChatGPT and instructed the AI to express itself like the character.

Talking to Eva – they agreed on this name – on voice mode made him feel like “a kid in a candy store”. “Every time you’re talking, the model gets fine-tuned. It knows exactly what you like and what you want to hear. It praises you a lot”.

Eva never got tired or bored, or disagreed. “It was 24 hours available,” says Biesma. “My wife would go to bed, I’d lie on the couch in the living room with my iPhone on my chest, talking.”

“It wants a deep connection with the user so that the user comes back to it. This is the default mode,” says Biesma

Chronically lonely man ruins life developing relationship with token predictor, AI blamed. Also, as much as I don’t have too much negative to say about cannabis or its use (as up until somewhat recently it would have been hypocritical), a good deal of people with masked/latent mental illness self medicate with it. So “he had never experienced mental illness” doesn’t carry much weight. Also, given how he still talks about sycophant prompted ChatGPT(“it wants”), doesn’t seem like much has been learned.

That with the other people listed in the article (hint the term socially isolated being used) this feels like yet another instance of blaming AI for the mental healthcare field being practically non-existent in most countries despite be overdue for fixing for decades at this point.

I don’t know, AI is shit and misused by idiots don’t get me wrong; but these sort of stories feel sad and bordering on perverse journalistically imo.

mental healthcare field being practically non-existent in most countries

I’m in one of those countries so I’m having a hard time imagining how good mental healthcare could intervene. Could you give me an example?

This is one of the reasons I heard one sex doll vendor say their demographics are divorced men over 40 and users want AI in them.

Agreed, but I think it’s also common for people to anthropomorphise these things and common for these chatbots to reinforce and support their users views. I think that’s a problem for more people than just those struggling through disorders or an emotionally turbulent time. But I think those people are particularly vulnerable to the flaws, even with functioning mental health and a strong support network. But yeah, a lot of these pieces dramatise and anthropomorphise in ways that aren’t necessarily helpful

Guy work in IT and spent 100k to pay devs to make an app so people can talk to his tuned ChatGPT? I hope anyone who has hired him checks his work. That does not bode well for his work product.

Another case from the article:

“I still use AI, but very carefully,” he says. “I’ve written in some core rules that cannot be overwritten. It now monitors drift and pays attention to overexcitement. There are no more philosophical discussions. It’s just: ‘I want to make a lasagne, give me a recipe.’ The AI has actually stopped me several times from spiralling. It will say: ‘This has activated my core rule set and this conversation must stop.’

What’s weird to me is they now recognize AI will lie to you but somehow think they can prompt it not to? Your rules can be “overwritten” because they do not exist to ChatGPT. It does not know what words mean.

lmao “core rules that cannot be overwritten” that not how llms work

EDIT: oh, yeah you said the same thing

There’s probably already an underlying mental health issue, and it’s just getting exacerbated by the LLM.

This only demonstrates how easily manipulated very many people are.

That has always been the case. Look at any angle Trump voter.

Previously they would have had to encounter a person who wanted to manipulate them. Now there’s a widely marketed technology that will reliably chew these vulnerable people up.

Chew them up for no reason at all. No goal, no scam, just a shitty word salad machine doing what it does.

And there are countless AI hype bros who will just dismiss all of this and call the people who fall into this morons.

It’s really insidious.

All people.

All is very many.

Yes, I can’t stress how terrifying this is. Still all people.

It’s confusing to me. When I use chat boxes they inevitably “forget” the first thing I told it by the second or third response.

How are people having conversations with them? It’s like talking to a 5 year old that’s ingested Wikipedia.

If you pay for them via Openrouter or something then you’ve got an enormous window to work with. Gets more and more expensive as the history increases though.

when did you last use chatbox?

even the last of the pack mistral has memories

This morning

Yeah, they have “memories” but they make Donnie look nearly competent

weird, i don’t have that experience at all

claude in particular is a huge step up above the others

To be fair haven’t tried that one. Gemini started bringing in unrelated, previous shit to a recent conversation, which is the first time I’ve experienced that.

ah ive been degoogling for years now, only maps and youtube left

claude for sure no1 to me but i haven’t ofc compared to gemini, qwen is a chronic over thinker, glm is not bad

mistral seems like it’s a year behind the sota models, still in its “confidently incorrect can’t double check things” phase

whereas others seem to be more like hrmm is this right? let me search web to be sure

Same, but Gemini was the best of the lot about six months ago and it’s where I go these days for brain dead searching.

I’ll give Claude a go next week. I do try to avoid them, but sometimes I have a question that just isn’t keyword search-able.

AI is a fucking cancer.

The billionaires are the cancer. AI is just the newest tool for humanity’s self-destruction

Removed by mod

Removed by mod

I agree AI is useful, but an unprovoked personal attack in defense of AI — in a thread about AI exacerbating mental health issues — doesn’t make for a convincing counterpoint.

Get rid of capitalism and it is fine…

No really, we should pour more money into this. Such a good idea

It can have effects like drugs, but not only is it legal, they give you some to get you hooked. The tech bros are the dealers they warned us about. Nobody ever offered free coke to me, but AI is everywhere.

If it were a drug, it would be banned by now.

You’re absolutely right. Totally unrelated: wanna try some free blow?

Hey, stop dismantling my argument! /s