Grinding in Oblivion you say?

Hi, I’m Eric and I work at a big chip company making chips and such! I do math for a job, but it’s cold hard stochastic optimization that makes people who know names like Tychonoff and Sylow weep.

My pfp is Hank Azaria in Heat, but you already knew that.


np, im just screaming into the void on this beautiful Monday morning

I couldn’t find further holes in it

Here’s a couple:

Daniel Kokotajlo, the actual ex-OpenAI researcher

Unclear to me what Daniel actually did as a ‘researcher’ besides draw a curve going up on a chalkboard (true story, the one interaction I had with LeCun was showing him Daniel’s LW acct that is just singularity posting and Yann thought it was big funny). I admit, I am guilty of engineer gatekeeping posting here, but I always read Danny boy as a guy they hired to give lip service to the whole “we are taking safety very seriously, so we hired LW philosophers” and then after Sam did the uno reverse coup, he dropped all pretense of giving a shit / funding their fan fic circles.

Ex-OAI “governance” researcher just means they couldn’t forecast that they were the marks all along. This is my belief, unless he reveals that he superforecasted Altman would coup and sideline him in 1998. Someone please correct me if I’m wrong, and they have evidence that Daniel actually understands how computers work.

I think Demis Hassabis (chemistry for alpha fold) has said the chance of AI killing all of humanity is somewhere between 0 and 100%.

Terrible news: the worst fella we know just dropped a banger post ;_;

Banger post title

When the cubbies won the series, I knew it meant that Trump 2016 was a lock. A Chicago pope can only mean Trump 2028 confirmed 😭

finger guns activated 🟩 👉👉

Video of interview with op’s old nemesis: https://www.youtube.com/watch?v=urcL86UpqZc&t=172s

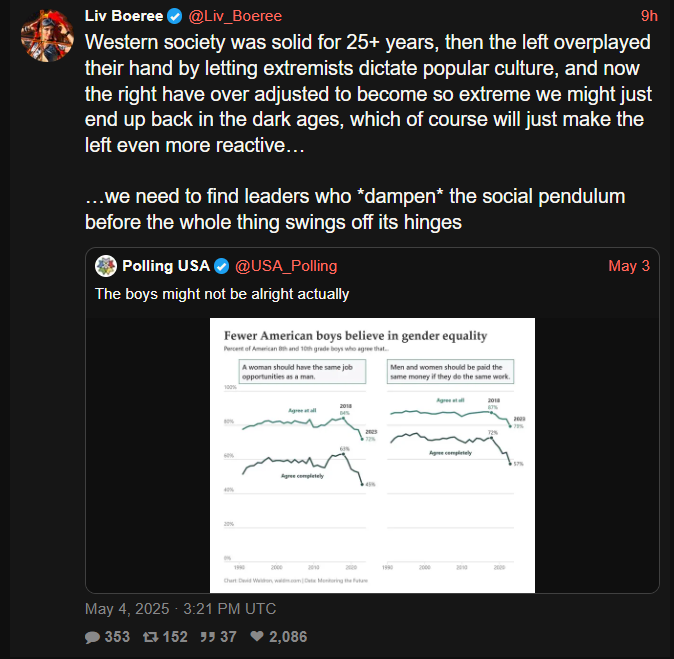

There’s so much to hate with this, but for some reason what really irks me is the “overplayed their hand” b.c. she was a poker player so she has to view all human interaction through the lens of gAmE tHeOrY instead of, you know, believing people should have human rights.

Like you just know in a parallel universe she’s yapping about how “the West has fallen b.c. leftists pushed their pawns too far” or “I have to vote for elon for president b.c. the left’s clerics exhausted all their healing mana”

More big “we had to fund, enable, and sanewash fascism b.c. the leftists wanted trans people to be alive” energy from the EA crowd. We really overplayed our hand with the extremist positions of Kamala fuckin Harris fellas, they had no choice but to vote for the nazis.

(reposting since this is from that awkward time on Sunday before the new weekly thread)


I did read one of Carlo’s pop sci books back in the day, was a nice read. Iirc he’s like one of the dudes all in on loop quantum gravity. Bet you’d know more about this than I do 😅


Thanks for sharing this. Sent it to my mother who has spent a large part of her career working with children who require special care like this, she really enjoyed it. Her take:

“Thanks for this. It is what I have done for 12 years. I wonder why they didn’t use the devices that are already out there that do these things and can be customized. It is very cool tho” <- (my mommy)

Also, man why do I click on these links and read the LWers’ comments. It’s always insufferable people being like, “woe is us, to be cursed with the forbidden knowledge of AI doom, we are all such deep thinkers, the lay person simply could not understand the danger of ai” like bruv it aint that deep, i think i can summarize it as follows:

*hits blunt* “bruv, imagine if you were a porkrind, you wouldn’t be able to tell why a person is eating a hotdog, ai will be like we are to a porkchop, and to get more hotdogs humans will find a way to turn the sun into a meat casing, this is the principle of intestinal convergence”

Literally saw another comment where one of them accused the other of being a “super intelligence denier” (i.e., heretic) for suggesting maybe we should wait till the robot swarms are coming over the hills before we declare it’s game over.

:'( sad one. feel bad for the bebe, being raised by insane people.

Kind of knew that after Claude Plays Pokemon went semi-viral, it was going to immediately get goodhart’d. I also saw the usual doomers be like BY END OF YEAR AGENTS WILL BEAT POKEMON, which I thought was a severe underestimate at the time. They were undoubtedly basing their projection on the Anthropic people who posted a little chart showing how far each version of Claude made it, waiting for pokemon-playing skill to emerge from larger and larger models, instead of thinking, hmm, they are iteratively refining the customized tools as it gets stuck. Then after Gemini ‘beat’ the game I read a disappointed response from an RL guy who said that, after trying to replicate the results, they concluded Google’s setup was basically 90% harness for the model, 10% model, despite the Google team basically implying it was raw pixels-to-action.